A project exhibited in The HYBRID CITY II: Subtle rEvolutions

(part of Stavros Didakis’ PhD research at i-DAT, Plymouth University, fully funded by the Onassis Foundation)

In this project, computational media and sensor technologies are used to measure, analyze, and control aspects of the domestic environment. Reading the measurable world from macro to micro opens a large number of possibilities for creating unexpected, flexible, and personalized spaces that enhance the inhabitants’ quality of living, providing added layers of information, affectivity, and aesthetics through calm technologies and ubiquitous computing. The fundamental consideration is to construct sensate spaces that domesticate computational media, with a primary interest in elevating aspects of the inhabitants’ well-being, such as mood, emotion, experience, and perception.

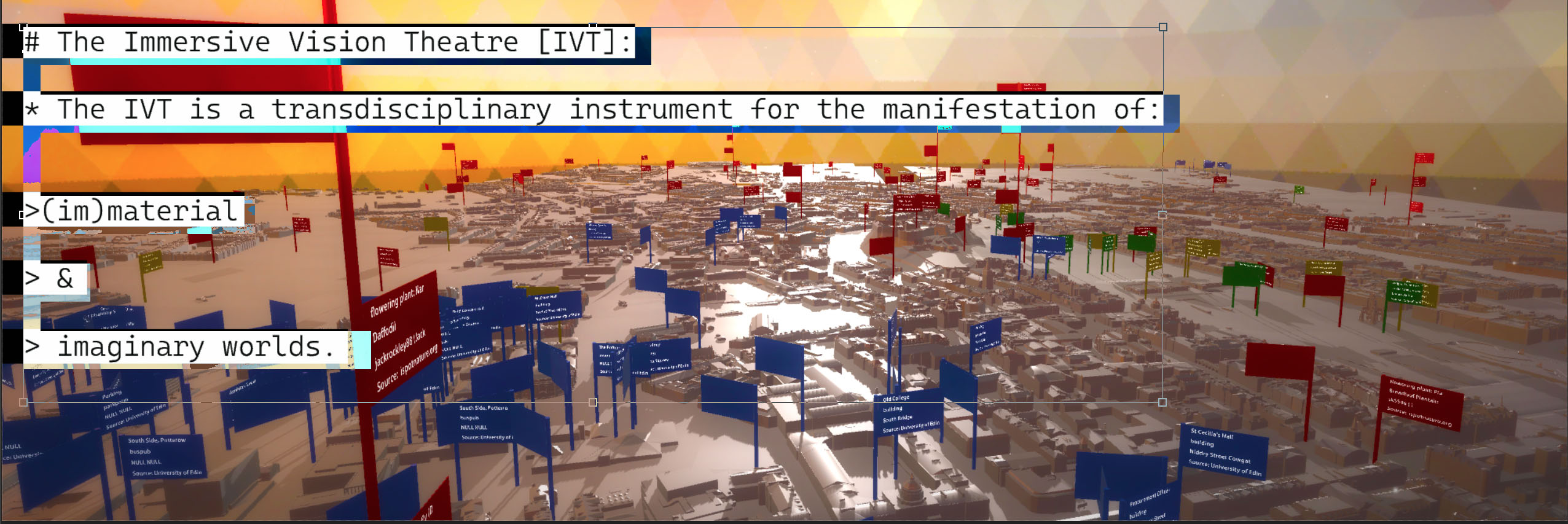

Environmental conditions, spatial information, circulation, virtual and physical navigation, social media, and biosensors can collectively provide quantitative or qualitative information that is used to adjust and personalize each environment so that it closely matches the inhabitants’ tastes and preferences. Middleware applications make this goal more feasible by providing the tools needed to link incoming data to outgoing processes, establish important automations, or suggest new creative and imaginative interactions. It is therefore possible to instantly connect an isolated sensor reading to projected visualizations, or to use a number of similar sensors to control the overall interior lighting. By extracting specific keywords from social media messages, or by using sentiment analysis methods to infer mood and emotion, it becomes possible to directly configure the properties of a personal space as a multi-layered canvas. The final result of each configured space can serve as a single pixel in the larger screen of the Hybrid City, so that overall well-being is mirrored, self-consciousness is provoked, and a cartography of lifestyles and living conditions is defined.
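As a rough illustration of the kind of link such middleware makes between incoming data and outgoing processes, the sketch below averages several ambient-light readings into a single lamp level and picks a colour from a keyword found in a message. It is not the project's actual middleware: the function names, the 0 to 500 lux range, the 0 to 255 dimmer scale, and the keyword-to-colour table are all assumptions made for the example, and the keyword lookup is only a simple stand-in for real sentiment analysis.

```python
from statistics import mean

# Hypothetical keyword-to-colour table (RGB); not taken from the project.
MOOD_COLOURS = {
    "happy": (255, 200, 80),
    "calm": (80, 160, 255),
    "tired": (255, 120, 60),
}


def scale(value, in_min, in_max, out_min, out_max):
    """Linearly map value from one range to another, clamping to the input range."""
    clamped = max(in_min, min(in_max, value))
    ratio = (clamped - in_min) / (in_max - in_min)
    return out_min + ratio * (out_max - out_min)


def lighting_level(lux_readings, dark_lux=0.0, bright_lux=500.0):
    """Average several light-sensor readings and return a dimmer level (0-255).

    The mapping is inverted: the darker the room, the brighter the lamps.
    The lux range is an assumed calibration, not a measured one.
    """
    ambient = mean(lux_readings)
    return round(scale(ambient, dark_lux, bright_lux, 255, 0))


def mood_colour(message, default=(255, 255, 255)):
    """Return the colour of the first known mood keyword found in the message."""
    words = message.lower().split()
    for keyword, colour in MOOD_COLOURS.items():
        if keyword in words:
            return colour
    return default


if __name__ == "__main__":
    print(lighting_level([120.0, 130.0, 125.0]))
    print(mood_colour("Feeling calm tonight"))
```

Keeping each link as a small function from input data to an output value means the same reading could just as easily drive a projected visualization or an audio parameter instead of a lamp.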

Middleware application to link input information to media devices (light, audio, music, visuals, etc.)

Realtime simulation in Unity (hardware under development)

Upload final results in Google Maps to provide instant visualization of multiple sources / homes
