Small-Faraway:

Big, little and smaller data (Macro-Meso-Micro). Small-Faraway explores the harvesting, analytics, visualisation and sonification of data. This research investigates techniques to capture data from an instrumentalised world, using sources such as Atomic Force Microscopy, remote sensors and the Met Office. It uses software and hardware, such as game engine technology, to create real-time models and display them in a variety of formats, such as Fulldome, VR, mobile phones and bespoke devices.

Some example projects…

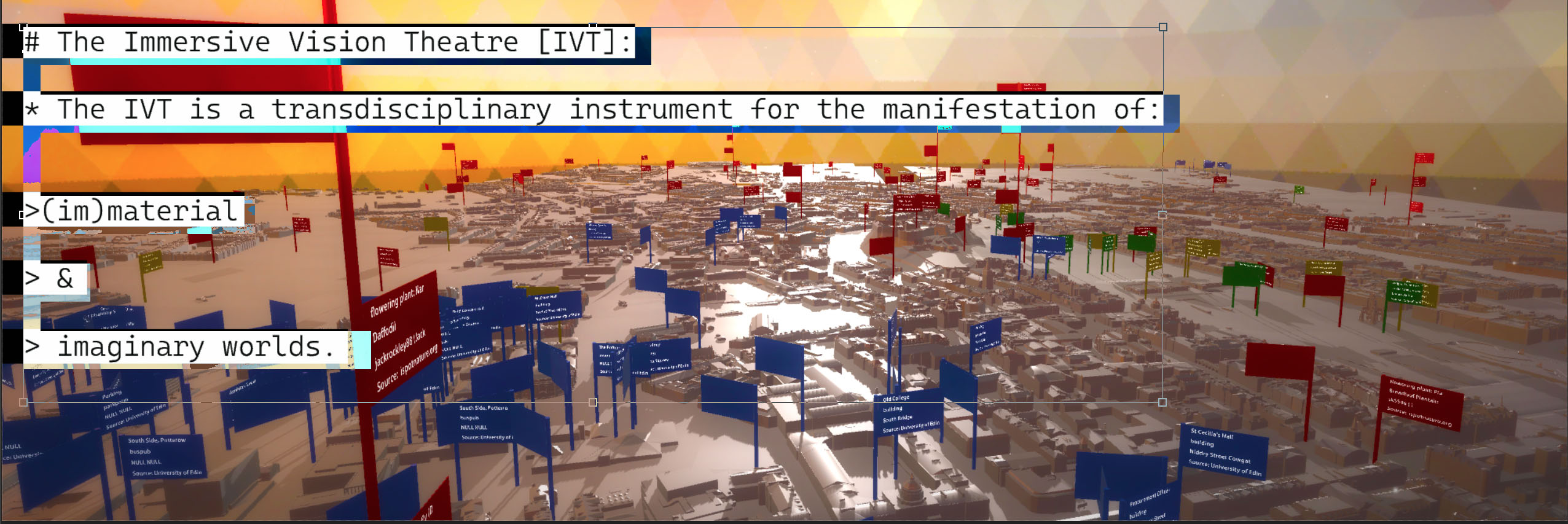

Much of i-DAT’s visualisation and simulation work (in VR/AR and Shared VR) takes place through the Immersive Vision Theatre (IVT). The IVT is a transdisciplinary instrument for the manifestation of material and imaginary worlds. Plymouth University’s William Day Planetarium (built in 1967) has been reborn as the 40-seat IVT. It is used for a range of learning, entertainment and research activities, including transdisciplinary teaching and bleeding-edge research in modelling and data visualisation. We can fly you to the edge of the observable Universe, across microscopic nano-landscapes, or immerse you in interactive data-scapes.

i-DAT has collaborated with the Wolfson Nanomaterials & Devices Laboratory (part of the University of Plymouth’s Nanotechnology research activity) on several projects. These include the development of fulldome ‘Nanoscapes’, created by measuring atomic forces at the nanoscale using the laboratory’s Atomic Force Microscope (AFM). These forces generate a data-set which can be translated into a height map and subsequently into a fulldome-corrected landscape.
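The two translation steps can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the pipeline used for the Nanoscapes: it assumes the AFM data-set arrives as a plain 2D grid of height samples, and it assumes that "fulldome corrected" means an equidistant fisheye (domemaster) image with the zenith at the centre. The function names `to_height_map` and `domemaster_uv` are hypothetical.

```python
import math


def to_height_map(samples, levels=255):
    """Rescale a 2D grid of height samples to integers in 0..levels."""
    flat = [value for row in samples for value in row]
    lowest, highest = min(flat), max(flat)
    span = highest - lowest
    if span == 0:
        # A perfectly flat scan carries no relief, so return all zeros.
        return [[0 for _ in row] for row in samples]
    return [
        [round((value - lowest) / span * levels) for value in row]
        for row in samples
    ]


def domemaster_uv(x, y, z, fov_degrees=180.0):
    """Map a view direction to equidistant-fisheye coordinates in 0..1.

    Assumption: z points at the dome zenith, which lands at (0.5, 0.5);
    a direction on the horizon lands on the edge of the image circle.
    """
    length = math.sqrt(x * x + y * y + z * z)
    theta = math.acos(z / length)              # angle away from the zenith
    radius = theta / math.radians(fov_degrees / 2)
    azimuth = math.atan2(y, x)
    u = 0.5 + 0.5 * radius * math.cos(azimuth)
    v = 0.5 + 0.5 * radius * math.sin(azimuth)
    return u, v


# Hypothetical 2x2 scan; the values are illustrative, not measured data.
scan = [[0.0, 1.0], [3.0, 4.0]]
height_map = to_height_map(scan)
```

In a game engine the resulting height map would typically drive a terrain or displacement mesh, and the fisheye mapping would be applied when rendering that landscape for the dome.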

QUORUMSCAPE integrates the Quorum algorithmic tools into a real-time geo-located CityScape simulation. An alpha version was released for the Mediacity (http://mediacity.i-dat.org/) conference and SAT ix Symposium (http://ix.sat.qc.ca/node/359?language=en), with further upgrades for the Tate Modern Turbine Hall Festival (http://i-dat.org/tate-modern-data-jam-250715/), the Big Buzz Plymouth event (http://bit.ly/1WJFTBQ) and Cairotronica. A stable distribution with mobile/Samsung Gear VR was commissioned by Design Informatics/Edinburgh University for the Edinburgh Cityscope Project.

With thanks to the MRI Department, Derriford Hospital, Plymouth Hospitals NHS Trust for the full-body MRI scan. The process involves the translation of DICOM images into a volumetric model, which then becomes a navigable immersive architecture within Unity 3D (using Phage mobile interactive devices).
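The core of that translation, stacking 2D slices into a 3D volume, can be sketched as follows. This is a minimal illustration rather than the project's actual pipeline: it assumes each slice has already been decoded from its DICOM file (for example with a library such as pydicom) into a `(z_position, rows)` pair, and the name `build_volume` is hypothetical.

```python
def build_volume(slices):
    """Stack (z_position, rows) slices into volume[z][y][x], ordered by z.

    Assumption: every slice is a rectangular grid of intensities and all
    slices share the same dimensions, as in a single MRI series.
    """
    ordered = sorted(slices, key=lambda item: item[0])
    shapes = {(len(rows), len(rows[0])) for _, rows in ordered}
    if len(shapes) != 1:
        raise ValueError("all slices must share the same dimensions")
    return [rows for _, rows in ordered]


# Three hypothetical 2x2 slices supplied out of order; values are illustrative.
series = [
    (10.0, [[5, 6], [7, 8]]),
    (0.0, [[1, 2], [3, 4]]),
    (20.0, [[9, 9], [9, 9]]),
]
volume = build_volume(series)
voxel = volume[1][0][1]  # intensity at z index 1, y index 0, x index 1
```

Sorting by position matters because files in a series are not guaranteed to arrive in anatomical order. The resulting volume could then be exported as a 3D texture or a surface mesh for use in a game engine.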

A Mote it is… is constructed from data captured by an AFM (Atomic Force Microscope) from a ‘mote’ or piece of dust extracted from the artist’s eye. The whirlwind of data projected within the gallery is rendered invisible by the gaze of the viewer. The more we look the more invisible it becomes – look away and it re-emerges from the maelstrom of data. A ghost of the mote can be seen in the viewer’s peripheral vision but never head on, if you see what I mean.

This volumetric rendering of a Drosophila, or common fruit fly, is composed of 600 slices at 6 μm, digitized through a scanning technique developed at the University of Vienna. Converted to a format readable by 3D visualisation software such as 3ds Max, this visualisation was output as a 3D video projection to be experienced in a dome environment. Because of the procedural structure of the 3D model, it is possible for a viewer to interact with the image, exploring the inner bodily cavities of the fly.
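To give a sense of scale: if the 6 μm figure is read as the spacing between consecutive slices (an assumption; it could equally be the slice thickness), the stack spans 600 × 6 μm = 3600 μm, or 3.6 mm. The sketch below works through that arithmetic and shows how a cut through such a stack exposes an interior cross-section. The function names and the tiny example volume are hypothetical.

```python
SLICE_COUNT = 600
SLICE_SPACING_UM = 6.0  # assumption: 6 μm is the slice-to-slice spacing


def stack_depth_um(count=SLICE_COUNT, spacing_um=SLICE_SPACING_UM):
    """Total physical depth covered by the slice stack, in micrometres."""
    return count * spacing_um


def slice_index_at(depth_um, count=SLICE_COUNT, spacing_um=SLICE_SPACING_UM):
    """Index of the slice containing a given depth, clamped to the stack."""
    index = int(depth_um // spacing_um)
    return max(0, min(count - 1, index))


def reslice_along_x(volume, x):
    """Cut volume[z][y][x] at a fixed x, giving a plane indexed [z][y]."""
    return [[row[x] for row in plane] for plane in volume]


# Tiny hypothetical 2x2x2 volume standing in for the full scan.
volume = [[[1, 2], [3, 4]], [[5, 6], [7, 8]]]
section = reslice_along_x(volume, 1)
```

Reslicing at right angles to the original scan direction is one simple way a viewer could look inside a volume like this; an interactive renderer would usually do the same thing with a movable clipping plane.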
